“Lip-reading is one of the most challenging problems in artificial intelligence, so it’s great to make progress on one of the trickier aspects, which is how to train machines to recognise the appearance and shape of human lips,” says Harvey. An element of language modelling is also used to train the computer to recognise the context of words spoken. This produces a success rate of 80 percent with a single speaker, and 60 percent with two different speakers. The team has a database of 12 people at the moment, using a list of around 1,000 words.

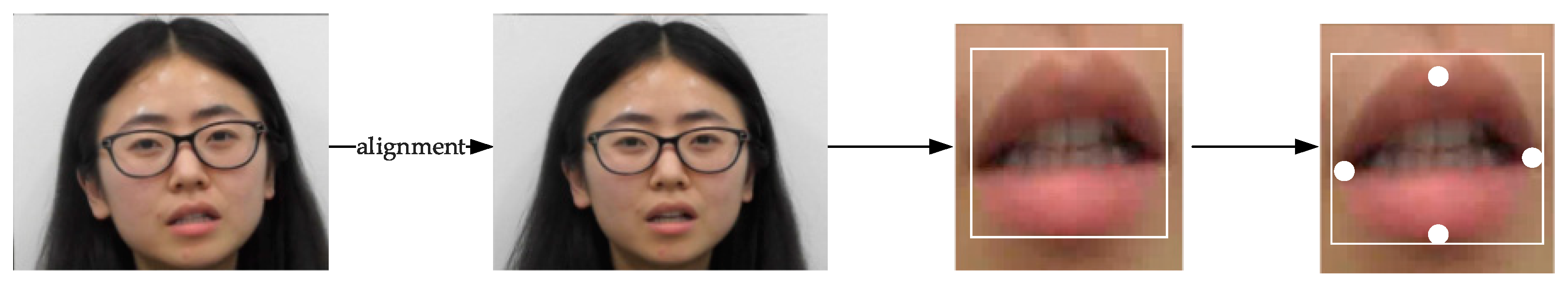

Researchers “train” the system using one person’s lip movements, then test it on another person’s lip movements. The technology uses deep neural networks that “learn” the way people move their lips, explains Professor Harvey. The technology can also be used where there is audio but it is difficult to pick up because of ambient noise, such as in cars and aircraft. She says that unique problems with determining speech arise when sound isn’t available – such as on CCTV footage – or if the audio is inadequate and there aren’t clues to give context to the conversation. Training system to recognise lip movements Helen Bear and Professor Richard Harvey of UEA’s School of Computing Sciences, can be applied “any place where the audio isn’t good enough to determine what people are saying,” says Dr. The visual speech recognition technology, created by Dr. Scientists at the University of East Anglia in Norwich, England, are working on the next stage of automated lip reading technology that could be used for deciphering speech from video surveillance footage. Images, but the University of East Anglia is pushing the next stage of this technology

Automated CCTV lip reading is challenging due to low frame rates and small